TL;DR

Anthropic has launched a new training framework named Constitutional AI, designed to embed explicit principles into AI models. This approach aims to improve AI alignment and safety. Details on implementation and impact are still emerging.

Constitutional AI trains models against a written set of principles instead of leaving the desired behavior implicit in the training data. Anthropic presents it as a shift toward AI systems whose behavior is easier to inspect and control.

Anthropic’s Constitutional AI framework was publicly announced in March 2024. The approach involves training AI models with a set of predefined principles or ‘constitutions’ that guide their responses and decision-making processes. According to Anthropic, this method seeks to address longstanding concerns about AI safety by making model behavior more predictable and aligned with human values.

While specific technical details remain limited, Anthropic states that the framework allows for the creation of customizable ‘constitutions’ that can be tailored to different use cases or ethical standards. The company emphasizes that this approach is designed to enhance the robustness and reliability of AI outputs, especially in sensitive or high-stakes applications.
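Because Anthropic has not published a format for these constitutions, the following is only a minimal sketch of how a customizable, per-use-case set of principles might be represented. The class names (`Principle`, `Constitution`), the `extend` method, and the example principle texts are all hypothetical and are not taken from Anthropic.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Principle:
    """One written rule. Names and texts here are illustrative only."""
    name: str
    text: str


@dataclass
class Constitution:
    """A named, ordered set of principles for one use case (hypothetical)."""
    use_case: str
    principles: list[Principle] = field(default_factory=list)

    def extend(self, use_case: str, extra: list[Principle]) -> "Constitution":
        """Return a new constitution that adds or overrides principles by name."""
        merged = {p.name: p for p in self.principles}
        merged.update({p.name: p for p in extra})
        return Constitution(use_case, list(merged.values()))

    def as_prompt(self) -> str:
        """Render the principles as numbered text a pipeline could consume."""
        return "\n".join(
            f"{i}. {p.text}" for i, p in enumerate(self.principles, 1)
        )


# A general-purpose base set (assumed wording, not Anthropic's).
BASE = Constitution("general", [
    Principle("harmless", "Avoid responses that help someone cause harm."),
    Principle("honest", "Do not state things you believe to be false."),
])

# A stricter variant tailored to a sensitive domain.
MEDICAL = BASE.extend("medical", [
    Principle("refer", "Recommend consulting a clinician for diagnoses."),
])
```

Calling `MEDICAL.as_prompt()` yields the two base principles followed by the medical one, which illustrates the idea of tailoring a shared base to a specific use case without editing the base itself.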

Why It Matters

The framework could influence how AI models are trained and aligned in the future. When principles are written down explicitly, developers can inspect and revise them, which may reduce unintended behaviors and improve user trust. It also signals a proactive effort by AI companies to address safety concerns through training methods, potentially setting a new industry standard.

Background

Anthropic, founded in 2021 by former OpenAI executives, has focused on AI safety and alignment. Its previous work includes developing language models with safety features. The launch of Constitutional AI builds on broader industry efforts to create more controllable and transparent AI systems, following increased regulatory and public scrutiny of AI safety issues.

“Constitutional AI represents a new paradigm in aligning AI systems with human values through explicit principles embedded during training.”

— Dario Amodei, CEO of Anthropic

“Our goal with Constitutional AI is to create more predictable, safe, and aligned models by formalizing ethical guidelines into the training process.”

— Anthropic spokesperson

What Remains Unclear

It is not yet clear how widely Anthropic plans to implement the Constitutional AI framework across its models or how it compares in effectiveness to other safety approaches. Technical specifics and real-world testing results are still forthcoming.

What’s Next

Next steps include further testing and refinement of the Constitutional AI framework, with potential rollout across more models. Industry observers will be watching for peer adoption and regulatory responses. Anthropic may also publish detailed technical papers in the coming months.

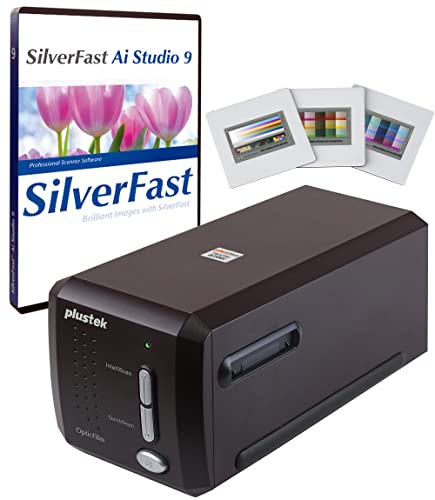

Plustek OpticFilm 8300i Ai Film Scanner – Converts 35mm Film & Slide into Digital, Bundle SilverFast Ai Studio 9 + QuickScan Plus, Include Advanced IT8 Calibration Target (3 Slide)

[NewlyLaunched] OpticFilm 8300i Ai equipped with new generation of chip, which increase by 38% scan speed compared to…

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

Key Questions

What is Constitutional AI?

Constitutional AI is a training approach where models are guided by explicit principles or ‘constitutions’ to improve safety, alignment, and predictability.

How does this differ from traditional AI training?

Conventional training shapes model behavior implicitly, through large datasets and feedback on individual outputs, without stating the guiding principles anywhere. Constitutional AI instead writes the ethical or operational guidelines down and incorporates them into the training process itself.
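The article does not describe the mechanism, so the following is a hypothetical sketch of one way written principles could enter a training pipeline: a model drafts an answer, critiques the draft against each principle, rewrites it, and the revised answers are collected as fine-tuning data. The function names, the prompt wording, and the `Model` type are assumptions made for illustration, not Anthropic's API or implementation.

```python
from typing import Callable

# A "model" here is any function from prompt text to response text.
# In practice this would wrap a real language model call (assumption).
Model = Callable[[str], str]


def critique_and_revise(model: Model, prompt: str, principles: list[str]) -> str:
    """Draft a response, then critique and rewrite it once per principle."""
    response = model(prompt)
    for principle in principles:
        critique = model(
            f"Principle: {principle}\nResponse: {response}\n"
            "Explain how the response falls short of the principle."
        )
        response = model(
            f"Principle: {principle}\nResponse: {response}\n"
            f"Critique: {critique}\n"
            "Rewrite the response to follow the principle."
        )
    return response


def build_training_pairs(
    model: Model, prompts: list[str], principles: list[str]
) -> list[tuple[str, str]]:
    """Pair each prompt with its revised response for later fine-tuning."""
    return [(p, critique_and_revise(model, p, principles)) for p in prompts]


def toy_model(text: str) -> str:
    """Stand-in model so the sketch runs without any external service."""
    if "Rewrite the response" in text:
        return "revised answer"
    if "Explain how" in text:
        return "critique"
    return "draft answer"
```

With the stand-in model, `build_training_pairs(toy_model, ["question"], ["Be honest."])` pairs the prompt with the revised answer instead of the first draft. The design point is that the principles appear as explicit text in the pipeline, so changing them changes the training data without relabeling examples by hand.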

Will this framework be available for other companies to use?

Details are not yet confirmed, but Anthropic has indicated that the framework could be adaptable or open for broader industry use in the future.

What are the potential risks or limitations?

As with any new approach, the effectiveness and safety of Constitutional AI depend on the quality of the principles encoded and how well they generalize across contexts. Further testing is needed.
